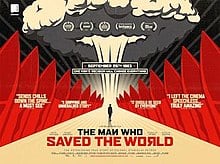

It was September 1983. Three weeks before, the Soviet air force had shot down a Korean airliner and Cold War tensions were running high. Stanislav Petrov, the hero of this story, was duty officer in the command centre of the Soviet Oko missile early warning system, when the computers reported that a ballistic missile had been fired from the USA, closely followed by five more.

Within seconds, the system had gone through thirty stages of confirmation. The attack was real.

What was he to do? His training and military protocol demanded that he should report it. His colleagues who had trained entirely in the military (Petrov was trained as an engineer) certainly would have done so. There then would have followed the most tremendous nuclear holocaust, resulting in death and destruction on both sides. But he had a nagging doubt. He had been led to expect that any attack from the Americans would be overwhelming. Why fire only six missiles? What was the point?

So Petrov reported a false alarm, and thereby saved the world. It transpired that the system had been triggered by an unusual combination of clouds and sunlight as the Soviet satellite flew over North Dakota. He was praised to start with, but was eventually reprimanded for failing to organise the paperwork properly (tickbox, Soviet-style).

Then he was moved to a less sensitive position. Almost certainly, the NATO military would have acted the same way – missing the point, as tickbox does. The real point is that sometimes you have to break the rules or depart from the process to do a good job.

And in today’s tickbox world, that may often happen.

At the same time, tickbox is expanding everywhere. Judges in some US states are using predictive software to work out how likely it is that a guilty prisoner will reoffend so that they can impose the right sentence. Bear in mind that the prisoners will not yet have reoffended and that the algorithm puts a great deal of weight on their answers to questions like: ‘Is it ever right to steal to feed your children?’ The software tends to predict that black people are twice as likely to reoffend as white people.

The problem is that human beings need to provide some oversight, as Stanislav Petrov managed to do so heroically. The political problem is that tickbox can’t always tell the difference between what a group of people might need, sometimes for the good of the group as a whole, and what an individual might need.

In medicine, it may only be a matter of time before some individuals can be treated entirely by algorithm, but it is not clear that any tickbox system – however sophisticated – can ever treat everyone or provide a fair assessment of how likely it is that a criminal will abscond or reoffend, or that a small business owner will thrive or fail. In order to be fair, those kinds of decisions require an element of trust, intuition and understanding of context.

This is the opposite of the direction in which we are currently travelling. That story is one that I included in my Tickbox book, and I couldn’t help thinking about it – not just in relation to the Russian invasion of Ukraine, but especially since Putin put his nuclear forces on ‘special alert’, whatever that means.

The problem here is exactly the same as in the Post Office scandal – that when you are very senior in an organisation, you lose touch with reality. You tend to believe that whatever figures are produced by computer programmes are true – in fact the tickbox delusion only really afflicts those who run the world.

This is increasingly a problem. “Computer-says-no culture runs the world,” said Marina Hyde in the Guardian about the postmasters and postmistresses scandal:

“Today, technology is deferred to even in the face of human tragedy far more than it was 20 years ago. Spool onward in the timeline and you will find more and more examples of ways in which technology was deemed to know best. In 2015, it emerged that in one three-year period, 2,380 sick and disabled people had died shortly after being declared ‘fit for work’ by a computerised test, and having their sickness benefits withdrawn. Today, bereaved parents are told that nothing can be done about the algorithms that pushed their teenage children remorselessly in the direction of content they believe ultimately contributed to them taking their own lives, even as a Facebook whistleblower recently said that firm was ‘unwilling to accept even little slivers of profit being sacrificed for safety…’”

This has a clear implication for war – which is increasingly tickboxed – and it underlines the peril in which the world now finds itself…

We have a brief moment when everyone feels more proud and connected – thanks to the unprecedented feeling of unity across Europe, not just Germany and France but also Poland and Hungary.

But this moment is also highly dangerous and, although we are in the early stages of this conflict – and we don’t yet know how insane Putin has become – we need to peer ahead a little, if we can.

So what is likely to be on our minds, once the ‘gallant Belgium’ period is over and Liz Truss’s volunteer fighters have been part of the Ukrainian international brigade for some weeks? I believe our own government may be facing complaints – rather as they were early in the Second World War – about their failure to pay anything towards protecting their own population.

If there is a tickbox issue about nuclear weapons on either side, it amounts to much the same thing. It is right that I should feel insecure for myself and my family – given what people are going through in Ukraine – but is it right that I should feel quite as vulnerable as I do?